Azure AI Legacy Integration: 5 Seamless Steps to Unstoppable Digital Transformation

For decades, many organizations have relied on powerful, custom-built, on-premises systems. These Monolithic Systems have been the backbone of global commerce. Today, the demands of the market have fundamentally changed. They require speed, personalization, and intelligence at every touchpoint. This new era mandates a commitment to Digital Transformation.

The challenge is clear: how can companies integrate cutting-edge cloud intelligence, specifically Azure AI, with these robust but rigid legacy architectures? The answer is a precise, non-disruptive strategy that builds Seamless bridges between the old world and the new. This technical challenge is known as Azure AI Legacy Integration.

Successful integration is not just about choosing tools, however. It depends on selecting the right architectural patterns, planning rigorously, and enforcing governance. This guide provides an expert roadmap for unlocking business value from your entire IT portfolio, and details how to achieve Seamless connectivity and sustained innovation.

The Imperative for Seamless Azure AI Legacy Integration

Integrating AI is no longer optional; it is essential for competitive survival. Companies that fail to inject intelligence into core processes risk being outpaced, unable to personalize services or optimize operations. Azure AI Legacy Integration is the bridge to this intelligent future.

The Technical Debt of Monolithic Systems

Monolithic Systems typically carry three forms of technical debt:

- Rigidity: Changes are expensive, slow, and high-risk.

- Scalability: The entire system must scale, even if only one component needs more resources.

- Data Silos: Data is often locked away in proprietary formats or isolated databases.

Why an API-First Strategy Is Essential for Transformation

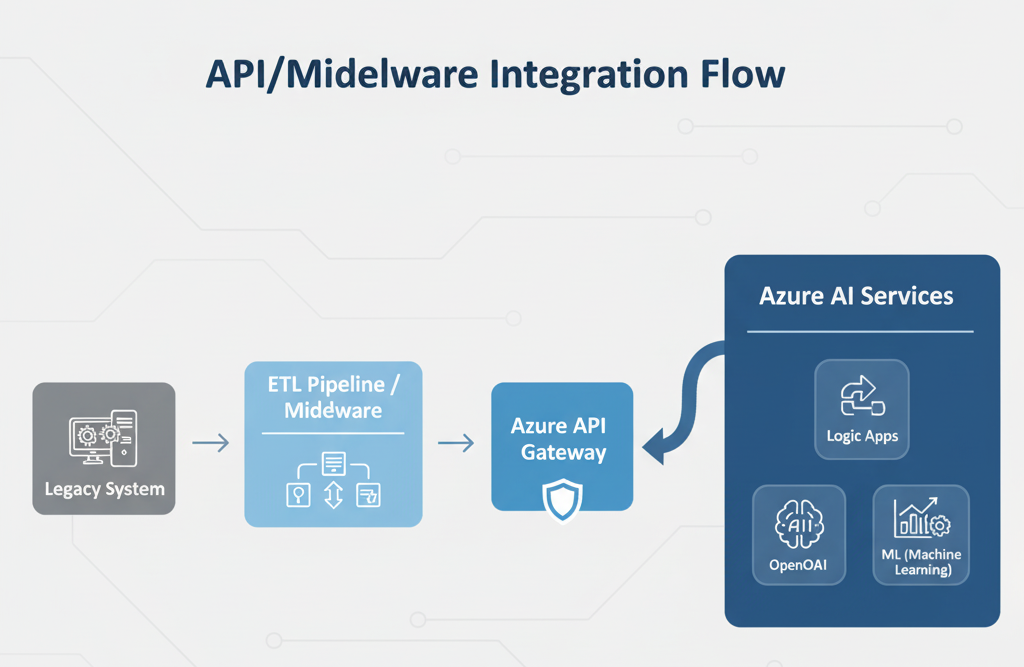

The API (Application Programming Interface) is the universal language of modern computing. An API-first strategy treats every legacy function and dataset as a service, abstracting away the complexity of the underlying Monolithic Systems.

Moreover, APIs provide a secure, standardized layer for data exchange. They allow new Azure AI services to access and update legacy data without modifying the core application code. This separation minimizes risk and accelerates development. This API layer is the first step toward true Digital Transformation.
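
As a minimal illustration of that abstraction, the Python sketch below wraps a hypothetical legacy routine, `legacy_lookup`, that returns a fixed-width text record. The facade function `get_customer` accepts a plain integer ID and returns a clean, JSON-friendly dictionary, so callers such as Azure AI services never see the record layout. The routine, field names, and offsets are all illustrative assumptions, not a real system's interface.

```python
import json

# Hypothetical fixed-width layout of the legacy record: (field, start, end).
LEGACY_LAYOUT = [
    ("customer_id", 0, 8),
    ("name", 8, 28),
    ("balance_cents", 28, 38),
]


def legacy_lookup(customer_id: str) -> str:
    """Stand-in for the unchanged legacy routine (hypothetical)."""
    records = {
        "00001234": "00001234" + "ADA LOVELACE".ljust(20) + "0000012550",
    }
    return records[customer_id]


def get_customer(customer_id: int) -> dict:
    """Modern facade: plain integer in, clean dictionary out."""
    raw = legacy_lookup(f"{customer_id:08d}")  # translate the request format
    fields = {name: raw[start:end].strip() for name, start, end in LEGACY_LAYOUT}
    return {  # translate the response format
        "customerId": int(fields["customer_id"]),
        "name": fields["name"].title(),
        "balanceCents": int(fields["balance_cents"]),
    }


print(json.dumps(get_customer(1234)))
# {"customerId": 1234, "name": "Ada Lovelace", "balanceCents": 12550}
```

The legacy routine stays untouched; only the facade knows the record layout, which is what lets new consumers evolve independently.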

Phase 1: Auditing and Data Strategy for Digital Transformation

No Azure AI Legacy Integration project can succeed without a robust data strategy. AI models are only as good as the data they consume. Legacy data is frequently messy, inconsistent, and fragmented.

Assessing Data Quality and Accessibility in Legacy Systems

A thorough data audit is the starting point. Identify which mission-critical data resides where. You must clean, standardize, and de-duplicate existing records. Poor data quality introduces bias and inaccuracy into AI models. This can lead to flawed, costly business outcomes.
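
A minimal sketch of the clean, standardize, and de-duplicate steps might look like the following. It assumes hypothetical customer records where a normalized email address is a good enough match key; real master-data matching often needs fuzzy matching and survivorship rules that this sketch does not attempt.

```python
import re

raw_records = [
    {"id": "001", "email": " Jane.Doe@Example.COM ", "phone": "(555) 010-2000"},
    {"id": "002", "email": "jane.doe@example.com", "phone": "555.010.2000"},
    {"id": "003", "email": "sam@example.com", "phone": ""},
]


def standardize(record: dict) -> dict:
    """Trim and lower-case emails; keep only digits in phone numbers."""
    return {
        "id": record["id"],
        "email": record["email"].strip().lower(),
        "phone": re.sub(r"\D", "", record["phone"]) or None,  # empty -> missing
    }


def deduplicate(records: list[dict], key: str) -> list[dict]:
    """Keep the first record seen for each value of `key`."""
    seen: set = set()
    unique = []
    for rec in records:
        if rec[key] not in seen:
            seen.add(rec[key])
            unique.append(rec)
    return unique


clean = deduplicate([standardize(r) for r in raw_records], key="email")
print([r["id"] for r in clean])  # ['001', '003']
```

Standardizing before de-duplicating matters: records 001 and 002 only collide once their emails are trimmed and lower-cased.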

Data governance matters as much as data cleaning. We recommend conducting a data readiness assessment, which evaluates your existing datasets against the needs of specific Azure AI services. The audit must also map data accessibility, determining whether data can be exposed via an API or requires an ETL process.
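
One small, measurable piece of a readiness assessment is field completeness. The sketch below assumes a hypothetical support-ticket classification model that needs both a `text` and a `label` field, and an assumed 95% completeness threshold; it covers data quality only, not the accessibility mapping.

```python
def readiness_report(records: list[dict], required_fields: list[str],
                     min_completeness: float = 0.95) -> dict:
    """Share of records with a non-empty value for each required field."""
    total = len(records)
    report = {}
    for field in required_fields:
        filled = sum(1 for r in records if r.get(field) not in (None, ""))
        ratio = filled / total if total else 0.0
        report[field] = {"completeness": ratio, "ready": ratio >= min_completeness}
    return report


tickets = [
    {"text": "Card declined twice", "label": "billing"},
    {"text": "App crashes on login", "label": "technical"},
    {"text": "Where is my refund?", "label": ""},
    {"text": "Change my address", "label": None},
]
print(readiness_report(tickets, ["text", "label"]))
```

Here the text field is complete but only half the tickets are labeled, so the dataset would need labeling work before training.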

Centralizing Data through Modern Data Lakes

To feed AI models effectively, data must be centralized. Organizations should implement a data lake or data warehouse, such as Azure Synapse Analytics or Azure Data Lake Storage. This breaks down existing data silos.

In addition, an Extract, Transform, Load (ETL) pipeline must be established. This process converts legacy data formats into standardized, AI-friendly schemas. This provides the clean, consistent, and scalable data source required for training and inference. This centralization is fundamental to sustainable Digital Transformation.
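
The three ETL stages can be sketched in plain Python. The legacy export format here (semicolon-delimited, `YYYYMMDD` dates, zero-padded amounts in cents) and the target field names are assumptions for illustration; in Azure, this work would typically run in a managed pipeline such as Azure Data Factory rather than a script.

```python
import csv
import io
import json
from datetime import datetime

# Extract source: assumed legacy export with YYYYMMDD dates and amounts in cents.
LEGACY_EXPORT = """ACCT_NO;TXN_DATE;AMT_CENTS
A-17;20240115;001999
A-17;20240203;000450
"""


def extract(text: str) -> list[dict]:
    """Read raw rows exactly as the legacy system exports them."""
    return list(csv.DictReader(io.StringIO(text), delimiter=";"))


def transform(row: dict) -> dict:
    """Map legacy columns to a standardized, AI-friendly schema."""
    return {
        "account_id": row["ACCT_NO"],
        "transaction_date": datetime.strptime(row["TXN_DATE"], "%Y%m%d").date().isoformat(),
        "amount_cents": int(row["AMT_CENTS"]),
    }


def load(rows: list[dict]) -> str:
    """Serialize as JSON Lines; a real pipeline would write to a lake or warehouse."""
    return "\n".join(json.dumps(r) for r in rows)


print(load([transform(r) for r in extract(LEGACY_EXPORT)]))
```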

Architectural Patterns for Azure AI Legacy Integration

Successfully integrating AI requires adopting specific, proven architectural patterns. These patterns facilitate communication between new cloud services and the old Enterprise Architecture. They prioritize decoupling and modularity.

Leveraging Azure API Gateways as the Connector

API Gateways are the strategic control point for Azure AI Legacy Integration. Azure API Management acts as a secure facade. It handles all incoming requests for AI services and routes them appropriately.

- Security: It enforces rate limits, authentication, and security policies. This protects the sensitive legacy backend.

- Abstraction: The Gateway translates requests and responses. The AI services see only a modern API structure. They do not see the complex legacy interface.

- Monitoring: It provides a central point for logging and tracing. This is vital for observing the performance of the integrated system.
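
To make those three responsibilities concrete, here is a toy in-process gateway in Python. It is not Azure API Management's API (APIM configures these behaviors declaratively through policies); it only illustrates the sequence of checks. The API keys, the limit of three calls per 60-second fixed window, and the route table are hypothetical.

```python
import time
from collections import defaultdict

# Hypothetical subscription keys mapped to client identities.
API_KEYS = {"key-fraud-svc": "fraud-service"}
RATE_LIMIT = 3          # assumed: max calls per client per window
WINDOW_SECONDS = 60.0   # assumed: fixed window length


class MiniGateway:
    """Toy gateway: authenticate, rate-limit, route, and log every call."""

    def __init__(self, routes, clock=time.monotonic):
        self.routes = routes                          # path -> backend callable
        self.clock = clock                            # injectable for testing
        self.windows = defaultdict(lambda: [0.0, 0])  # client -> [window_start, count]
        self.log = []                                 # central trace: (path, client, status)

    def handle(self, api_key, path, payload):
        client = API_KEYS.get(api_key)
        if client is None:
            return self._record(path, None, 401, {"error": "unauthorized"})
        start, count = self.windows[client]
        now = self.clock()
        if now - start >= WINDOW_SECONDS:  # window expired: start a new one
            start, count = now, 0
        if count >= RATE_LIMIT:
            return self._record(path, client, 429, {"error": "rate limit exceeded"})
        self.windows[client] = [start, count + 1]
        backend = self.routes.get(path)
        if backend is None:
            return self._record(path, client, 404, {"error": "no route"})
        return self._record(path, client, 200, backend(payload))

    def _record(self, path, client, status, body):
        self.log.append((path, client, status))
        return status, body


def legacy_balance(payload):
    return {"balance_cents": 12550}  # stand-in for the protected legacy backend


gw = MiniGateway({"/customers/balance": legacy_balance}, clock=lambda: 0.0)
print(gw.handle("wrong-key", "/customers/balance", {}))
statuses = [gw.handle("key-fraud-svc", "/customers/balance", {"id": 1})[0] for _ in range(4)]
print(statuses)  # [200, 200, 200, 429]
```

The backend is only reached after authentication and rate limiting succeed, and every outcome, including rejections, lands in one log.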

This approach keeps the legacy system operational while the AI components are developed independently in the cloud. For implementation guidance, review the best practices for API Gateways at learn.microsoft.com.


Microservices and the Strangler Fig Pattern

For the most rigid Monolithic Systems, the Strangler Fig Pattern is often the best choice. This gradual Cloud Migration strategy re-implements small, specific legacy functions as new Microservices, while a routing layer sends each request either to the new service or to the old system.

These Microservices are deployed in Azure and call the old system only when necessary. Over time, the new Microservices “strangle,” or replace, the old monolithic code. This enables continuous modernization and avoids the risk of a full-scale, “big-bang” replacement. The method is slower, but it significantly de-risks the Digital Transformation process.
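
The routing layer at the heart of the pattern can be sketched as a small facade. The handlers below are hypothetical stand-ins for the monolith and for one newly extracted invoice microservice; in practice this routing often lives in the API gateway rather than in application code.

```python
from typing import Callable

Handler = Callable[[dict], dict]


def legacy_monolith(request: dict) -> dict:
    return {"handled_by": "monolith", "path": request["path"]}


def invoice_microservice(request: dict) -> dict:
    return {"handled_by": "invoice-microservice", "path": request["path"]}


class StranglerFacade:
    """Route migrated capabilities to new services; everything else to the monolith."""

    def __init__(self, fallback: Handler):
        self.fallback = fallback
        self.migrated: dict[str, Handler] = {}

    def migrate(self, prefix: str, handler: Handler) -> None:
        """Cut one capability over to its new microservice."""
        self.migrated[prefix] = handler

    def rollback(self, prefix: str) -> None:
        """Send traffic for a capability back to the monolith."""
        self.migrated.pop(prefix, None)

    def handle(self, request: dict) -> dict:
        path = request["path"]
        for prefix, handler in self.migrated.items():
            if path == prefix or path.startswith(prefix + "/"):
                return handler(request)
        return self.fallback(request)


facade = StranglerFacade(legacy_monolith)
print(facade.handle({"path": "/invoices/42"})["handled_by"])  # monolith
facade.migrate("/invoices", invoice_microservice)
print(facade.handle({"path": "/invoices/42"})["handled_by"])  # invoice-microservice
print(facade.handle({"path": "/orders/7"})["handled_by"])     # monolith
```

The `rollback` method is where the de-risking comes from: if a new service misbehaves, traffic for that one capability can return to the monolith without touching anything else.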

Industry analysts generally regard a phased approach as lower-risk than a single large replacement. Analysts at gartner.com consistently advocate for modularity in large-scale modernization efforts.

Harnessing Azure Services: The Technical Toolset for Seamless Integration

Microsoft Azure provides a comprehensive suite of tools for hybrid integration. These services create durable, scalable connections for Azure AI Legacy Integration, which is the foundation of a Seamless experience.

Azure Integration Services (Logic Apps, Service Bus, Data Factory)

These services are the technical workhorses for connecting systems:

- Azure Logic Apps: Orchestrates automated workflows. It suits rule-based, event-driven integration tasks, such as triggering an AI model after a record update in a legacy database.

- Azure Service Bus: Acts as a secure, reliable message broker. This decouples the AI workloads from the legacy system. If the legacy system goes offline temporarily, messages queue safely.

- Azure Data Factory (ADF): The primary ETL tool. It ingests data from on-premises sources, transforms it in the cloud, and loads it into Azure storage for AI consumption. ADF is essential for continuous data synchronization.
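
The decoupling that Service Bus provides can be illustrated with an in-memory stand-in. This sketch does not use the Azure SDK; the real service adds durable storage, message locks, retries, and dead-lettering, and production code would use a client such as `ServiceBusClient` from the `azure-servicebus` package instead. The order IDs and risk scores are hypothetical.

```python
from collections import deque


class InMemoryQueue:
    """Stand-in for a Service Bus queue: messages wait until consumed."""

    def __init__(self):
        self._messages = deque()

    def send(self, message: dict) -> None:
        self._messages.append(message)

    def receive(self):
        return self._messages.popleft() if self._messages else None

    def __len__(self):
        return len(self._messages)


class LegacyConsumer:
    """Legacy-side consumer that may be offline for maintenance."""

    def __init__(self):
        self.online = False
        self.processed = []

    def drain(self, queue: InMemoryQueue) -> None:
        """Process queued messages only while the legacy system is up."""
        while self.online:
            msg = queue.receive()
            if msg is None:
                break
            self.processed.append(msg["order_id"])


queue = InMemoryQueue()
legacy = LegacyConsumer()
for order_id in (101, 102, 103):
    queue.send({"order_id": order_id, "risk_score": 0.1})  # AI workload publishes results

legacy.drain(queue)      # legacy is offline: messages stay queued
print(len(queue))        # 3
legacy.online = True
legacy.drain(queue)      # back online: the backlog is processed in order
print(legacy.processed)  # [101, 102, 103]
```

The producer never waits on the legacy system, which is the property that lets the AI workload and the monolith fail and recover independently.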

In addition, these tools manage complexity behind the API Gateways. They ensure data integrity and reliable transfer between disparate environments.

Integrating AI Models via Azure OpenAI and Azure Machine Learning

The goal is to infuse intelligence at scale. Azure AI Legacy Integration allows organizations to use state-of-the-art models without upgrading on-premises hardware, because the models run in Azure.

The Azure OpenAI Service provides managed access to powerful Generative AI models. Azure Machine Learning (Azure ML) provides the platform for building, training, and deploying custom models. Both services can be securely accessed via Microservices and API calls, which enables real-time decision-making. For instance, a legacy system can call an Azure ML model to get a fraud score and receive the decision in near real time via the API Gateway. This pattern forms the foundation of modern Enterprise Architecture.
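
The fraud-score call can be sketched with Python's standard library. This assumes an Azure ML managed online endpoint using key-based authentication, with the key sent as a bearer token. The payload shape (`{"data": [...]}`), the `fraud_score` response field, and the 0.8 threshold are hypothetical: the real contract depends on the model's scoring script. If the call is routed through API Management instead, the gateway's own authentication requirements apply.

```python
import json
import urllib.request

FRAUD_THRESHOLD = 0.8  # assumed business threshold


def build_scoring_request(scoring_uri: str, api_key: str,
                          transaction: dict) -> urllib.request.Request:
    """Build a JSON POST request for a model scoring endpoint."""
    body = json.dumps({"data": [transaction]}).encode("utf-8")  # payload shape is model-specific
    return urllib.request.Request(
        scoring_uri,
        data=body,
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {api_key}"},
        method="POST",
    )


def fraud_decision(score: float) -> str:
    """Turn the model's score into a business decision."""
    return "review" if score >= FRAUD_THRESHOLD else "approve"


def score_transaction(scoring_uri: str, api_key: str, transaction: dict,
                      timeout_s: float = 2.0) -> str:
    """Call the endpoint and return 'review' or 'approve'."""
    req = build_scoring_request(scoring_uri, api_key, transaction)
    with urllib.request.urlopen(req, timeout=timeout_s) as resp:
        result = json.loads(resp.read())
    return fraud_decision(float(result["fraud_score"]))  # response field name is assumed
```

Keeping request construction and decision logic separate from the network call makes both easy to test without a live endpoint.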

Governance, Security, and Compliance in Azure AI Legacy Integration

Integrating AI into core business processes introduces new security and compliance risks. A Seamless integration must include robust governance from day one. This is non-negotiable for true Digital Transformation.

Implementing Zero-Trust for Hybrid Environments

Traditional network perimeter defenses are insufficient on their own. A Zero-Trust model assumes no user or service can be trusted by default. This is critical in a hybrid environment mixing cloud and on-premises components.

- Authentication: Every service call, whether from a legacy system or an Azure AI model, must be verified and authorized.

- Micro-segmentation: Network access is limited to only what is absolutely necessary for the task. This minimizes the blast radius of any potential security breach.
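
The sketch below shows the per-call checks in miniature: verify the signature, reject expired tokens, and allow only the caller-to-service pairs that policy explicitly grants. It uses a hand-rolled HMAC token purely to expose those steps; production systems would rely on standard tokens issued by Microsoft Entra ID and managed identities, not on this scheme. The secret, caller names, and scopes are hypothetical.

```python
import base64
import hashlib
import hmac
import json
import time

SECRET = b"demo-only-secret"  # hypothetical; real keys belong in a vault
ALLOWED_SCOPES = {            # micro-segmentation: who may call what
    "legacy-billing": {"fraud-model:score"},
    "fraud-model": {"billing-api:read"},
}


def issue_token(caller: str, scope: str, ttl_s: int = 300, now=None) -> str:
    now = time.time() if now is None else now
    claims = json.dumps({"sub": caller, "scope": scope, "exp": now + ttl_s}).encode()
    sig = hmac.new(SECRET, claims, hashlib.sha256).hexdigest()
    return base64.urlsafe_b64encode(claims).decode() + "." + sig


def verify_call(token: str, required_scope: str, now=None) -> bool:
    """Check signature, expiry, and policy on every call; trust nothing by default."""
    now = time.time() if now is None else now
    try:
        encoded, sig = token.split(".")
        claims_raw = base64.urlsafe_b64decode(encoded)
        claims = json.loads(claims_raw)
    except ValueError:  # malformed token, bad base64, or bad JSON
        return False
    expected = hmac.new(SECRET, claims_raw, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig.encode(), expected.encode()):
        return False
    if claims["exp"] < now or claims["scope"] != required_scope:
        return False
    return required_scope in ALLOWED_SCOPES.get(claims["sub"], set())


t = issue_token("legacy-billing", "fraud-model:score", now=1000)
print(verify_call(t, "fraud-model:score", now=1100))  # True
print(verify_call(t, "fraud-model:score", now=2000))  # False: expired
```

A validly signed token is still refused if the caller is not authorized for the target scope, which is the micro-segmentation rule expressed in code.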

This enhanced security posture is a necessary investment. It protects decades of stored data within the Monolithic Systems.

Data Governance and Handling Sensitive Information

Data Governance is critical when integrating sensitive legacy data with artificial intelligence systems. You must ensure compliance with relevant regulations and standards by establishing and maintaining clear policies for data residency, encryption, and access control.

Moreover, you must clearly define who is responsible for maintaining data quality. The Azure AI Legacy Integration process typically includes anonymizing or masking sensitive data fields before they reach the AI model. Effective Data Governance helps ensure that AI outputs are accurate and comply with legal requirements. For an in-depth exploration of compliance frameworks, refer to the Data Governance insights available on ibm.com.
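
A minimal masking step might look like the sketch below. It assumes hypothetical field names and a pseudonymization key kept outside the AI environment. Note that keyed hashing is pseudonymization, not anonymization: anyone holding the key can recompute tokens for known IDs, so the output may still count as personal data under some regulations.

```python
import hashlib
import hmac

PSEUDONYM_KEY = b"rotate-me"  # hypothetical; store and rotate outside the AI environment


def pseudonymize(value: str) -> str:
    """Keyed hash: stable per customer, so joins still work without exposing the raw ID."""
    return hmac.new(PSEUDONYM_KEY, value.encode(), hashlib.sha256).hexdigest()[:16]


def mask_card(pan: str) -> str:
    """Keep only the last four digits of a card number."""
    digits = "".join(ch for ch in pan if ch.isdigit())
    return "*" * (len(digits) - 4) + digits[-4:]


def mask_email(email: str) -> str:
    """Keep the first character of the local part and the domain."""
    local, _, domain = email.partition("@")
    return local[:1] + "***@" + domain


def prepare_for_ai(record: dict) -> dict:
    return {
        "customer_token": pseudonymize(record["customer_id"]),
        "card": mask_card(record["card_number"]),
        "email": mask_email(record["email"]),
        "amount_cents": record["amount_cents"],  # non-identifying feature kept as-is
    }


print(prepare_for_ai({"customer_id": "C-1001", "card_number": "4111 1111 1111 1111",
                      "email": "jane.doe@example.com", "amount_cents": 4999}))
```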

Overcoming Performance Bottlenecks with Intelligent Architecture

Performance issues are among the most common causes of failure in AI integration projects. Monolithic Systems often cannot handle the high-throughput, low-latency demands of real-time AI. The architectural solution is smart workload placement.

Offloading AI Workloads to the Cloud

The fundamental principle of Azure AI Legacy Integration is to shift the computational heavy lifting to Azure. Training large models or running complex simulations requires specialized hardware, such as GPU clusters, which Azure provides on demand.

In addition, the cloud handles inference (using the trained model to make predictions). The legacy system’s only job is to send input data through the API Gateways and receive the prediction or insight in return. This removes the computational burden from the on-premises environment, although every call still adds a network round trip.
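
Because every call crosses the network, the legacy caller should bound how long it waits. The sketch below assumes a hypothetical `call_cloud_model` function (for example, an HTTP call to a scoring endpoint) and a `manual_review` fallback value; it returns the cloud prediction if it arrives within a latency budget and otherwise lets the transaction continue on a safe default path.

```python
import concurrent.futures
import time

_pool = concurrent.futures.ThreadPoolExecutor(max_workers=4)


def predict_with_budget(call_cloud_model, features, budget_s=0.5, fallback="manual_review"):
    """Wait at most `budget_s` seconds for the cloud prediction, else return a safe default."""
    future = _pool.submit(call_cloud_model, features)
    try:
        return future.result(timeout=budget_s)
    except (concurrent.futures.TimeoutError, OSError):
        # Timed out or network failure: the legacy transaction takes the fallback path.
        return fallback


def fast_model(features):
    return "approve"


def slow_model(features):
    time.sleep(0.3)  # simulates a slow network round trip
    return "approve"


print(predict_with_budget(fast_model, {"amount": 10}))
print(predict_with_budget(slow_model, {"amount": 10}, budget_s=0.05))
```

One design caveat: a timed-out call is not cancelled; it finishes in the background thread and its result is discarded. That keeps the sketch simple, but a busy system would also want to cap outstanding calls.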

The Role of Edge Computing in Reducing Latency

For highly time-sensitive applications, even cloud-based inference may introduce unacceptable latency. This is where edge computing becomes vital. Edge computing runs smaller, optimized AI models closer to the source of the data.

For example, a manufacturing plant using predictive maintenance could run a small Azure AI model on a local server (the “edge”) to detect anomalies immediately, without waiting for a cloud round trip. This hybrid approach helps deliver Seamless performance for critical operations.
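
As a stand-in for a compact edge model, the sketch below uses a rolling z-score, a simple statistical technique swapped in for illustration rather than an Azure AI model. The window size, the three-standard-deviation threshold, and the vibration readings are assumptions. Only flagged readings would need to be forwarded to the cloud.

```python
from collections import deque
from statistics import mean, stdev


class EdgeAnomalyDetector:
    """Rolling z-score detector small enough to run on a plant-floor server."""

    def __init__(self, window: int = 20, threshold: float = 3.0, min_history: int = 5):
        self.readings = deque(maxlen=window)
        self.threshold = threshold
        self.min_history = min_history

    def check(self, value: float) -> bool:
        """Return True if `value` deviates sharply from recent readings."""
        is_anomaly = False
        if len(self.readings) >= self.min_history:
            mu, sigma = mean(self.readings), stdev(self.readings)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        self.readings.append(value)
        return is_anomaly


detector = EdgeAnomalyDetector()
vibration = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 1.0, 4.8]
flags = [detector.check(v) for v in vibration]
print(flags)
```

Every reading, including anomalous ones, enters the window here, so the baseline adapts to a genuine level shift; excluding flagged readings is a reasonable alternative when spikes should never influence the baseline.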

Scaling the Future: Continuous Modernization and the Seamless Payoff

Azure AI Legacy Integration is not a one-time project. Its goal is to establish a permanent engine for innovation. The correct Enterprise Architecture allows for continuous, low-risk evolution.

Building a Culture of Continuous Digital Transformation

Technology is only half the battle. A culture shift is required to maintain the momentum of Digital Transformation. Teams must move from being gatekeepers of the Monolithic Systems to enablers of Microservices and cloud innovation. This includes adopting DevOps practices and fostering collaboration between IT and business units.

Furthermore, leadership must commit to funding not just new features, but the continuous refactoring of technical debt. This commitment is the key to maintaining a Seamless flow of data and services.

The ROI of Effective Azure AI Legacy Integration

The return on investment (ROI) is significant:

- Cost Reduction: Automating manual processes and reducing maintenance on legacy components.

- Increased Revenue: Enabling personalized customer experiences and faster product development.

- Risk Mitigation: Improving fraud detection and compliance through intelligent systems.
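
To make “significant” measurable, the standard return-on-investment ratio can be applied with the three benefit categories above as inputs, all measured over the same period: ΔC for cost reduction, ΔR for increased revenue, ΔL for losses avoided through better fraud detection and compliance, and I for the total integration investment. How each term is estimated is an assumption each organization should state explicitly.

```latex
\mathrm{ROI} = \frac{(\Delta C + \Delta R + \Delta L) - I}{I}
```

A positive ROI means the combined benefits exceed the investment over the chosen period.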

The strategic value of this integration is a future-proof Enterprise Architecture that helps your business stay competitive over the coming decade. For a view on how industry leaders are approaching this, see the analysis from techcrunch.com on major enterprise AI strategies.

Effective Azure AI Legacy Integration drives competitive advantage. This Seamless approach allows companies to deploy powerful, custom AI applications. This capability transforms business processes, moving them from reactive to predictive.

Addressing Common Deployment Questions

❓ Frequently Asked Questions (FAQ)

Q1: Is the Strangler Fig Pattern mandatory for moving off Monolithic Systems?

No, the Strangler Fig Pattern is not mandatory, but it is highly recommended for large, mission-critical Monolithic Systems where risk must be minimized. It is one of the safest ways to achieve Cloud Migration piece by piece. Other approaches include “Lift-and-Shift” (rehosting), which is faster but moves the monolith to the cloud largely unchanged, and “Re-platforming,” which makes targeted upgrades such as moving to a managed database or runtime. However, Strangler Fig, combined with API Gateways, offers the most Seamless path to modern Microservices and, ultimately, successful Azure AI Legacy Integration. Many architects regard it as the reference approach for Enterprise Architecture modernization.

Q2: How do I handle real-time data synchronization between Azure AI and my legacy database?

Real-time synchronization for Azure AI Legacy Integration typically relies on Azure Integration Services.

Specifically, you’d use Azure Service Bus to act as a reliable message broker. Azure Data Factory remains ideal for any necessary batch synchronization.

When data changes occur in the legacy system, a small Change Data Capture (CDC) mechanism or database trigger starts the process. This sends an event directly to the Service Bus.

An Azure Logic Apps workflow then picks up this event and uses it to update the relevant data store in Azure, such as Azure Cosmos DB or a search index.

This workflow ensures the Azure AI model always accesses fresh, up-to-date information. Maintaining this currency is critical for real-time Digital Transformation applications. Examples include fraud detection or inventory management.
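
Message brokers can deliver an event more than once, and events can arrive out of order, so the consumer that updates the Azure data store should be idempotent. The sketch below assumes each CDC event carries a monotonically increasing per-record `version` (for example, derived from the database log sequence); the dictionary stands in for Cosmos DB or a search index, and the event fields are hypothetical.

```python
read_store: dict[str, dict] = {}  # stand-in for Cosmos DB or a search index


def apply_change(event: dict) -> bool:
    """Apply a CDC event only if it is newer than the stored version."""
    key = event["record_id"]
    current = read_store.get(key)
    if current is not None and current["version"] >= event["version"]:
        return False  # duplicate delivery or an older, out-of-order change
    if event["op"] == "delete":
        # Keep a tombstone so a late, older upsert cannot resurrect the record.
        read_store[key] = {"version": event["version"], "deleted": True}
    else:
        read_store[key] = {"version": event["version"], "data": event["data"], "deleted": False}
    return True


events = [
    {"record_id": "SKU-1", "version": 1, "op": "upsert", "data": {"stock": 10}},
    {"record_id": "SKU-1", "version": 3, "op": "upsert", "data": {"stock": 7}},
    {"record_id": "SKU-1", "version": 2, "op": "upsert", "data": {"stock": 9}},  # arrives late
    {"record_id": "SKU-1", "version": 3, "op": "upsert", "data": {"stock": 7}},  # redelivered
]
print([apply_change(e) for e in events])  # [True, True, False, False]
print(read_store["SKU-1"]["data"])        # {'stock': 7}
```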

Q3: What is the main role of API Gateways versus an Enterprise Service Bus (ESB) in this architecture?

In modern Enterprise Architecture, API Gateways often replace or enhance the traditional Enterprise Service Bus (ESB). While the ESB focuses on internal service routing and transformation, the API Gateway, like Azure API Management, emphasizes externalization, security, and governance. It serves as a secure entry point for Microservices and Azure AI components, managing rate limiting, authentication, and policy enforcement. Although ESBs can handle complex internal routing, API Gateways are essential for cloud access and security.

Q4: How long does a typical Azure AI Legacy Integration project take?

The timeline for Azure AI Legacy Integration varies significantly based on the complexity and rigidity of the Monolithic Systems. A focused pilot project using pre-built Azure AI services (like sentiment analysis) and an existing API gateway can be deployed in 3 to 6 months. However, a full-scale Digital Transformation to Microservices using the Strangler Fig Pattern and covering core business functions can take 18 months to 3 years. The key is to demonstrate quick wins with high ROI early on, often by targeting a specific, high-impact use case, such as improving customer service with an Azure AI-powered chatbot.

“The most successful firms don’t replace legacy; they strategically encapsulate it. AI provides the perfect motive for this long-overdue architectural change.” – Industry Senior Analyst

Conclusion: The New Era of Seamless Enterprise Architecture

Azure AI Legacy Integration is complex, but a Seamless future is attainable. By focusing on API Gateways, adopting Microservices with patterns like the Strangler Fig, and utilizing the Azure AI platform, organizations can move beyond Monolithic Systems. This approach represents not just modernization, but a Digital Transformation that integrates intelligence into Enterprise Architecture for a competitive edge. The time to create these connections is now.